I often hear people talking about KPIs (key performance indicators) as if the term were just another word for metrics. Is that true? Strictly speaking, no. All KPIs are metrics, but not all metrics are KPIs. So, what’s the difference?

According to Wikipedia, a metric is “a measure for quantitatively assessing, controlling or selecting a person, process, event, or institution”. Most activities in IT systems development can be effectively managed with, typically, a combination of three to five different metrics at any one time.

In the Capability Maturity Model Integration (CMMI), a KPI is “a metric using which the progress or fulfilment of vital goals or critical success factors can be measured and monitored” and is used to measure and monitor a “key performance area”. The clue to the difference lies in the US English usage of the word ‘key’ to mean ‘very important’. A KPI is a special type of metric: one which gives the manager of an organizational unit a particularly powerful insight into its overall performance and whether or not that is improving. Management consultants have suggested that no more than three KPIs should be needed to manage any one organizational unit, no matter how many activities it performs.

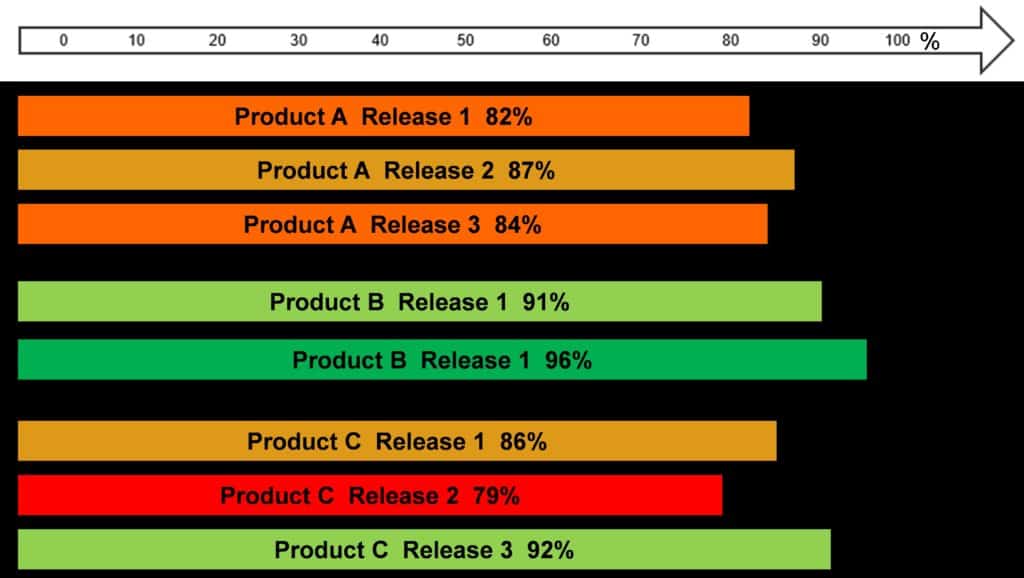

CMMI also insists that, to qualify as a KPI, a metric must have an associated performance target. This gives it special power, because once met the target can be stretched a little – not too much, just enough for the extra to be achievable – and then we are on the road to continuous process improvement. Such targets can be used as incentives to greater achievement. Achievement of a target can be the basis of some kind of reward, and its very existence can generate healthy competition between teams. Below, for example, is a comparison of the defect detection effectiveness of three teams across their product releases, which can be put (as I have done) on a wiki page for all to see. The target was 90% and the colours speak for themselves.
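As a rough illustration of how such a comparison might be produced, here is a minimal Python sketch. It assumes the common definition of DDE (defects found before release as a percentage of all defects found before and after release), which is not spelled out in this article. The team names, defect counts and the `dde` and `status` helpers are hypothetical, chosen only to show the arithmetic against a 90% target.

```python
def dde(found_before_release: int, found_after_release: int) -> float:
    """Defect Detection Effectiveness as a percentage (assumed definition)."""
    total = found_before_release + found_after_release
    if total == 0:
        raise ValueError("no defects recorded, so DDE is undefined")
    return 100.0 * found_before_release / total


def status(value: float, target: float = 90.0) -> str:
    """Colour-code a KPI value against its target."""
    return "green" if value >= target else "red"


# Hypothetical figures: (defects found before release, defects found after)
releases = {
    "Team A": (45, 5),
    "Team B": (40, 10),
    "Team C": (57, 3),
}

# Map each team to its DDE (one decimal place) and its colour
report = {}
for team, counts in releases.items():
    value = dde(*counts)
    report[team] = (round(value, 1), status(value))

for team, (value, colour) in report.items():
    print(f"{team}: DDE {value}% ({colour})")
```

Once every team is consistently green, the `target` argument can be raised slightly, which is the "stretching" described above.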

The three most helpful KPIs for any QA function are usually:

- Defect Detection Effectiveness (DDE)

- Cost of Quality (CoQ)

- Phase Containment

DDE and CoQ have been discussed in previous articles. Phase Containment measures the extent to which defects are found and fixed in the same development lifecycle phase in which the error that caused them was made. For example, defects in the requirements specification are found and fixed before the requirements are approved, so they do not “escape” or “leak” into the solution design phase. 100% phase containment from requirements capture all the way through component integration testing would mean that all your system tests pass first time! If that’s an interesting, if unlikely, prospect, watch out for a future article about how it works.
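To make the idea concrete, here is a minimal Python sketch of one way phase containment could be calculated. It assumes each defect is logged with the phase in which it was introduced and the phase in which it was found. The `phase_containment` function, the phase names and the defect counts are all hypothetical.

```python
from collections import Counter


def phase_containment(defects):
    """Return the containment percentage for each phase.

    `defects` is a list of (phase_introduced, phase_found) pairs.
    A defect is "contained" when both phases are the same.
    """
    introduced = Counter(origin for origin, _ in defects)
    contained = Counter(origin for origin, found in defects if origin == found)
    return {
        phase: 100.0 * contained[phase] / introduced[phase]
        for phase in introduced
    }


# Hypothetical defect log
defects = (
    [("requirements", "requirements")] * 8   # caught before approval
    + [("requirements", "design")] * 2       # leaked into solution design
    + [("design", "design")] * 3
    + [("design", "system test")] * 1        # leaked into system test
)

result = phase_containment(defects)
for phase, pct in result.items():
    print(f"{phase}: {pct:.0f}% contained")
```

With these made-up figures, 8 of the 10 requirements defects and 3 of the 4 design defects are contained in the phase where they originated.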

Author: Richard Taylor

Richard has dedicated more than 40 years of his professional career to the IT business. He has been involved in programming, systems analysis and business analysis. Since 1992 he has specialised in test management. He was one of the first members of ISEB (Information Systems Examination Board). At present he is actively involved in the activities of the ISTQB (International Software Testing Qualifications Board), where he mainly contributes improvements to training materials. Richard is also a very popular lecturer at our training sessions and a regular speaker at international conferences.